Contra Carol Cleland: IRL Scientists Really Do Adhere To Karl Popper's Falsification

... and also Cleland's "false-positive/false-negative minimization"; and also Bayesianism; there are many mental models that adequately describe scientists' in-real-life behavior

I.

I recently read an interesting paper by Carol Cleland, “Historical science, experimental science, and the scientific method”, originally published in Geology; November 2001; v. 29; no. 11; p. 987–990. The abstract reads:

Many scientists believe that there is a uniform, interdisciplinary method for the practice of good science. The paradigmatic examples, however, are drawn from classical experimental science. Insofar as historical hypotheses cannot be tested in controlled laboratory settings, historical research is sometimes said to be inferior to experimental research. Using examples from diverse historical disciplines, this paper demonstrates that such claims are misguided. First, the reputed superiority of experimental research is based upon accounts of scientific methodology (Baconian inductivism or falsificationism) that are deeply flawed, both logically and as accounts of the actual practices of scientists. Second, although there are fundamental differences in methodology between experimental scientists and historical scientists, they are keyed to a pervasive feature of nature, a time asymmetry of causation. As a consequence, the claim that historical science is methodologically inferior to experimental science cannot be sustained.

She has a lot of good insights in there, and presents ideas that were novel to me but “obvious in hindsight”. That said, I think she is too harsh on falsificationism and overestimates the degree to which “real science” deviates from it. For some background on why she wrote the article: Henry Gee, an editor of Nature (i.e. someone important in the scientific community), basically said experimental sciences rule and historical sciences drool1, so Cleland rebutted. The outline of her argument as I understand it (although I’m emphasizing different aspects of it than she did) is:

Gee claims that experimental science is “real science” because it relies on falsification, whereas historical science doesn’t/can’t rely on falsification and thus isn’t “real science”.

Cleland “proves” that actually, experimental science doesn’t rely on falsification (we’ll go through her arguments in a moment).

Therefore, experimental science is no better than historical science (and implicitly, both are “real science”).

Corollary: Falsificationism is not a good criterion for deciding what constitutes real science.

I’m not particularly interested in whether experimental science is “better” than historical science (I think the whole argument is pretty silly, like asking whether ice cream or the Pythagorean theorem is better2), so I won’t bother addressing any of that aspect of Cleland’s arguments. Instead, I’m purely focusing on her claim that “real scientists” don’t actually follow falsificationism.

II.

By “falsificationism”, we are referring to an idea from philosopher Karl Popper, who was trying to draw a line between what is and isn’t science. Popper argues that a core component of science is to propose falsifiable hypotheses, and then to conduct experiments that have a risk of falsifying those hypotheses. If the experimental results do not match what the hypothesis predicted, then you “must reject” the hypothesis.3

Cleland argues that in real life, scientists don’t actually adhere to falsificationism. She provides an interesting example:

the response of nineteenth century astronomers to the perturbations in the orbit of Uranus; the orbit deviated from what was predicted by Newtonian celestial mechanics. Astronomers didn't behave like good falsificationists and reject Newton's theory: they rejected the assumption that there were no planets beyond Uranus, and discovered the planet Neptune. The moral of this story is that rejecting a hypothesis in the face of a failed prediction is sometimes the wrong thing to do

It’s actually relatively easy to “rescue” falsification here: The hypothesis that the astronomers were testing was not merely “Newtonian mechanics is right” but rather “Newtonian mechanics is right, and also we know of all the significant masses (e.g. planets) in our solar system.”4

Cleland is aware of this objection, and calls the thing I tacked on an “auxiliary assumption”. I’ll give her response to my objection, but first I need to provide some background on the terminology used. When she says “C”, she is referring to a “condition”. And by “condition”, she means the thing you artificially produce in a lab to test your hypothesis. For example, if your hypothesis is “Copper expands when heated”, then your “condition” might be heating a piece of copper. When she uses the term “risky”, she’s referring to Popper’s terminology, where a test is “risky” if it has a chance of falsifying the hypothesis. In other words, non-risky tests (tests which had no real chance of falsifying the hypothesis) should not be considered as evidence supporting the hypothesis. So with those definitions in mind, here is Cleland’s response to my “rescued falsification”:

[The real world behavior of scientists] resembles the activity condemned by Popper, namely, an ad hoc attempt to save a hypothesis from refutation by denying an auxiliary assumption. However, there is an alternative interpretation: it may be viewed as an attempt to protect the hypothesis from misleading disconfirmations. It is significant that the same process of holding C constant while varying auxiliary conditions also occurs upon a successful test of a hypothesis. Moreover, C itself may be removed for the purpose of determining whether it was required for the successful result. While these responses to successful tests superficially resemble attempts at falsification, a little reflection reveals that this can't be what is going on, because they do not conform to Popper's requirement that the tests performed be “risky.” The hypothesis has survived similar tests, and no one expects it to fail this time. Even if it does, it won't automatically be rejected. Viewed from this perspective, the activity more closely resembles an attempt to protect the hypothesis from misleading confirmations. In other words, a close look at the work of experimental scientists suggests that they are primarily concerned with protecting their hypotheses against false negatives and false positives, as opposed to ruthlessly attempting to falsify them. This makes good sense because, as discussed earlier, any actual test of a hypothesis involves many auxiliary conditions that may affect the outcome of the experiment independently of the truth of the hypothesis.

I agree with Cleland that (good) scientists “are primarily concerned with protecting their hypotheses against false negatives and false positives”, or more generally, they’re interested in acquiring true beliefs about the universe. I think her mental model (we might call it “False-Negative/False-Positive minimization” or FNFP-min for short) for what scientists do is an adequate description of reality. But I disagree with her that falsificationism is an inadequate model. I think they’re both fine, as long as you acknowledge that “in real life”, scientists are evaluating many (possibly infinitely many) hypotheses simultaneously (or less charitably, that the mental states of the scientists are such that the hypothesis being tested isn’t always explicitly and unambiguously defined). Let me walk through how my mental model of falsificationism would explain the astronomers’ behavior in the Neptune example she gave earlier.

The scientists in question have essentially an infinite number of beliefs (or what Cleland might call “auxiliary conditions”). For example, they believe that 1+1=2, or that light travels at such-and-such a speed, or that “there is such a thing as light”, or that “when I see X, this implies a causal history where some non-zero number of photons bounced off of X and then traveled to my eye”, and so on.

So when a scientist does an experiment like “Let’s measure the position of Uranus”, they’re testing the hypothesis “Newtonian mechanics is true, and also all of my other beliefs are true.” And when Uranus doesn’t show up at the expected location, they do indeed reject this hypothesis.

At that point, a near infinite number of new hypotheses come rushing in to fill the newly created vacuum:

Maybe Newtonian mechanics is wrong.

Maybe my belief that I know about all of the planets in the solar system is wrong.

Maybe my belief that I know how light works is wrong (e.g. I thought that because I had experienced such-and-such visual stimuli, this meant that Uranus was here; but what if I could get such-and-such visual stimuli while Uranus was actually there instead?)

Maybe the universe works in such a way that 1+1=2 in most cases, but specifically when trying to calculate the position of Uranus, 1+1 equals something else.

Etc.

The scientists would then rank these new hypotheses by plausibility (e.g. by Bayesian reasoning, or just subjectively, it doesn’t really matter), which then informs them of which new tests and experiments to run.
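To make this bookkeeping concrete, here is a minimal Python sketch of my own (it is not something from Cleland’s paper). The `Belief` class, the `rival_hypotheses` function, and every `doubt` number are hypothetical and chosen purely for illustration. The sketch also makes a simplifying assumption: each rival hypothesis blames exactly one belief, whereas in reality a rival could blame several at once.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Belief:
    statement: str
    # Hypothetical, subjective number in [0, 1]: how willing we are
    # to give this belief up. Nobody measured these.
    doubt: float


def rival_hypotheses(beliefs):
    """Once the conjunction of all beliefs has been falsified, generate
    one rival hypothesis per belief (each rival blames exactly one
    belief), ordered so the most doubtful belief comes first."""
    ranked = sorted(beliefs, key=lambda b: b.doubt, reverse=True)
    return [f"Maybe this is wrong: {b.statement}" for b in ranked]


# Illustrative beliefs and doubt levels only.
beliefs = [
    Belief("Newtonian mechanics is correct", 0.05),
    Belief("We know every planet in the solar system", 0.30),
    Belief("Light behaves the way we think it does", 0.01),
    Belief("1 + 1 = 2", 0.000001),
]

for rival in rival_hypotheses(beliefs):
    print(rival)
```

With these made-up numbers, the “unknown planet” rival lands at the top of the list and the “arithmetic is broken” rival at the bottom, which is the ordering in which you would then go looking for new tests to run.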

In some cases, this “hypothesis, experiment, reject, new hypothesis” cycle can happen very quickly—so quickly that it might be hard to even notice that it’s happening (which might lead you to falsely conclude that it’s not happening and that the scientist is therefore not following the Popperian falsification methodology):

You hypothesize that copper expands when heated (and also that all your other beliefs are true).

You perform an experiment where you heat the metal.

The copper does not appear to expand.

Within milliseconds, you think “wait, is the heater actually working?” This implies (at least) two new hypotheses that you on some level know, but that you came up with so quickly you didn’t even have a chance to formulate them into explicit words in your mind: “Perhaps this piece of copper is very hot, but my belief that copper expands when heated is false” and “Perhaps the piece of copper is not very hot, because something went wrong with my heating equipment.”

Within milliseconds, you devise an experiment that can distinguish between these two hypotheses: Quickly and gingerly tap the piece of copper with your finger and see if it feels very hot. You want to tap it quickly so that if it indeed is very hot, you wouldn’t have touched it long enough to burn yourself.

You conduct the experiment and tap the piece of copper, feeling no heat. Maybe this takes a few seconds.

Within milliseconds, you come up with new hypotheses: “Maybe the copper is hot, but not very hot, and it would take longer contact with the metal to experience the heat. Maybe that level of heat is not high enough to cause a measurable expansion. Maybe I had a wrong expectation for how long it takes to heat a piece of copper or how powerful my heater is, or maybe the heater is just defective or maybe I’ve set it up incorrectly or don’t know how to use it.” vs “Maybe the heater is completely broken and the copper hasn’t been heated at all.”

Again, within milliseconds, you devise a new experiment: Put your finger on the piece of copper for a longer period of time (a couple of seconds), to see if you can detect subtle heat on it.

etc.

I think this is a realistic description of how a scientist performing an (admittedly fairly elementary) experiment (perhaps high school students trying out practical experiments for the first time) would behave in real life.

Yes, their behavior can be adequately modeled by Cleland’s FNFP-min mental model (they’re “refusing” to reject their initial hypothesis before they’ve eliminated the false negative possibility that some equipment failure occurred). But it can also be described by Popperian falsification just as well: they did in fact reject their initial hypothesis, and then generated new hypotheses, and then tested those, and rejected them as well, and then generated yet even more hypotheses, etc.

III.

Cleland also argues that “removing C” demonstrates that the scientists are not following Popperian falsification, but again I think her reasoning is fallacious here. The example scenario is now: the scientist successfully heated the piece of copper and observed that it expanded. They then “don’t heat the copper” (i.e. they simply “do nothing”) and check whether the copper would have expanded anyway.

In the FNFP-min model, we might say that the scientist is not satisfied that they have gathered enough evidence to conclude that heat causes copper to expand, and so they are now trying to minimize false positives. Fine, Cleland’s FNFP-min model can work to describe the scientist.

But the scientist’s behavior can also be described just fine under the Popperian falsification model: Since the copper did indeed expand when heated, we “do not reject” the initial hypothesis. But notice that under the Popperian paradigm, we don’t conclude “therefore heat causes copper to expand”, because under this paradigm, an experimental result can never prove its corresponding hypothesis to be true. Instead, we move on to a new hypothesis: “maybe copper expands no matter whether it’s heated or not”, and then we conduct a new experiment to try to falsify this new hypothesis.

We essentially have a hypothesis that “seems” to be true: the hypothesis “copper expands when heated” seems to be true because when we conduct an experiment where we heat copper, the copper (usually) expands. In Cleland’s FNFP-min model, we want to become more and more confident it’s true by gradually reducing the false negatives (explaining away all the times the copper didn’t expand by, e.g., experimental error) and reducing the false positives (making sure that the link between heating and expansion is truly a causal one). In the Popperian falsification model, we want to become more and more confident that our hypothesis is true by falsifying any competing or overlapping hypotheses.

Of course, an important question remains: All models are wrong, but some models are useful; so is my mental model “more useful” than Cleland’s? I don’t know; I feel like they’re pretty equivalent. One way in which my model is weaker than Cleland’s is that under my model, scientists are quickly and subconsciously churning through dozens if not hundreds of hypotheses during every experiment they conduct, and so when they try to formally and explicitly state what “the” hypothesis is that they’re testing (e.g. in the associated paper that they hope to publish), we, the scientific community, all secretly know this is a gross oversimplification. Cleland’s model spares us from having to tell this particular “noble lie”. On the other hand, one way in which my model is stronger than Cleland’s is that there is a widespread existing belief in academia that scientists do indeed follow Popperian falsification. Under my model, this belief is literally true, and under Cleland’s model, that is the noble lie.

IV.

But, plot twist, I actually think both FNFP-min and Popperian falsification are noble lies we might want to teach to kids to keep things simple for pedagogical purposes, and that “real science” is more complicated.

Cleland presents the following example about the extinction of dinosaurs to argue why falsification just doesn’t make a lot of sense in historical sciences:

there is little in the evaluation of historical hypotheses that resembles what is prescribed by falsificationism. [...] As geologist T.C. Chamberlin (1897) noted, good historical researchers focus on formulating multiple competing (versus single) hypotheses. Chamberlin's attitude toward the testing of these hypotheses was falsificationist in spirit; each hypothesis was to be independently subjected to severe tests, with the hope that some would survive. A look at the actual practices of historical researchers, however, reveals that the main emphasis is on finding positive evidence--a smoking gun. A smoking gun is a trace that picks out one of the competing hypotheses as providing a better causal explanation for the currently available traces than the others.

The meteorite-impact hypothesis for the extinction of the dinosaurs provides a good illustration (Alvarez et al., 1980). Prior to 1980 there were many different explanations for the demise of the dinosaurs, including disease, climate change, volcanism, and meteorite impact. The discovery of extensive deposits of iridium in the K-T boundary focused attention on the impact of a meteor; iridium is rare at Earth's surface, but high concentrations exist in Earth's interior and in meteors. The subsequent discovery of shocked quartz in the K-T boundary cinched the case for the impact of a large meteorite, because there was no known volcanic mechanism for producing that much shocked quartz. The causal connection between the impact and the extinction, however, required a bit more work (Clemens et al., 1981). It wasn't until it became clear that the dinosaurs had died out fairly quickly around the time of the impact that the iridium and shocked quartz took on the character of a "smoking gun" for the meteorite-impact hypothesis. In short, of the available hypotheses and in light of the existing evidence (e.g., fossil record, iridium, shocked quartz, crater), the meteorite-impact hypothesis supplied the most plausible causal mechanism for understanding the demise of the dinosaurs.

I’m not sure if Cleland intended this to be an argument in favor of FNFP-min over Popperian falsification. If so, I’m not convinced. It seems like the Popperian falsification describes the above behavior just fine—at least in the form that I’ve described, where I allow for many different hypotheses to exist in the scientist’s mind simultaneously (sometimes in implicit/subconscious form), and they try to falsify as many as possible.

However, I think an even better mental model is Bayesianism, though to be frank, that might be a little bit aspirational.

In the Popperian falsification mental model, we have hypotheses. Some of these hypotheses end up being falsified, in which case we might say they have probability P=0 of being true.5 For the ones that haven’t been falsified, I don’t think the Popperian model really provides any principled way to assign a probability value of them being true. We might be tempted to say “all surviving hypotheses have a uniform probability of being true” or something along those lines, but “probably” there are an infinite number of surviving hypotheses, and so when you divide it out, they all get P=0 anyway.
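To spell out the “divide it out” step, suppose (my assumption, for illustration) that exactly N hypotheses survive and each gets the same share of probability:

$$
P(H_i) = \frac{1}{N} \quad \text{for } i = 1, \dots, N, \qquad \lim_{N \to \infty} \frac{1}{N} = 0.
$$

So the more surviving hypotheses we admit, the closer each one’s share gets to zero, and a uniform assignment over infinitely many of them cannot give any single hypothesis a positive probability.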

In the Cleland FNFP-min mental model, we have some uncertainty about every hypothesis, but I don’t believe it provides a way to assign probability values to these hypotheses. That is, for any particular hypothesis, we will have had some supporting evidence (but maybe some of them are false positives) and some contradicting evidence (but maybe some of them are false negatives)—but this is all qualitative descriptions rather than quantitative probabilities.

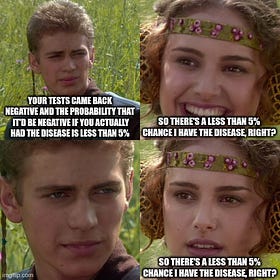

In contrast, in “real science” we “almost” talk about the probability of a hypothesis being true. And by that, I mean lots of scientific papers will include a p-value, which is one of the three values needed to calculate the probability that the hypothesis is true. I previously wrote a post about calculating probabilities from p-values (and two other values).

In practice, insofar as someone can be said to be practicing Bayesianism, they are likely to be practicing it “qualitatively”. That is to say, they usually do not explicitly do numerical calculations in their head to update their probability-beliefs. Rather, when they encounter new evidence, they “informally intend” to increase or decrease their belief in a particular hypothesis. This means their posterior belief (the probability they think their hypothesis is true after seeing the evidence) is at least related to their prior belief (the probability they thought their hypothesis was true before seeing the evidence), which is already an improvement over how people instinctively reason probabilistically.

It’s in this sense that many “real” scientists are Bayesian: They have a set of competing hypotheses (for why the dinosaurs went extinct, for example). As new evidence comes in, they “informally intend to update” their probabilities. Cleland talks about a “smoking gun”, but this is mainly an artifact due to the fact that currently most scientists are only doing Bayesianism informally and qualitatively: A smoking gun means that one of the hypotheses seems so significantly likely relative to all the others, that you are able to pick out this difference in magnitude despite not having formally tracked any numbers. In principle, scientists could (and should aspire to) perform more formal quantitative Bayesianism, which would allow a tentative consensus to form around one (or a set of) “preferred” hypothesis whose probability was calculated to be higher than the others.
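For a sense of what the more formal, quantitative version might look like, here is a minimal Python sketch of a single Bayesian update over the competing extinction hypotheses that Cleland lists. Everything numeric here is made up by me for illustration: the priors, the likelihoods, and the assumption that these four hypotheses are mutually exclusive and exhaustive. None of it is an estimate from the literature, and `bayes_update` is just a name I picked.

```python
def bayes_update(priors, likelihoods):
    """Return the posterior P(H | E) for each hypothesis H.

    priors:      {hypothesis: P(H)}, assumed to sum to 1
    likelihoods: {hypothesis: P(E | H)} for the same hypotheses
    """
    unnormalized = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(unnormalized.values())  # this is P(E)
    return {h: value / total for h, value in unnormalized.items()}


# Made-up priors: before the new evidence, treat all four as equally likely.
priors = {
    "disease": 0.25,
    "climate change": 0.25,
    "volcanism": 0.25,
    "meteorite impact": 0.25,
}

# Made-up likelihoods: how probable is finding an iridium layer plus
# shocked quartz at the K-T boundary, if each hypothesis were true?
likelihoods = {
    "disease": 0.01,
    "climate change": 0.01,
    "volcanism": 0.05,
    "meteorite impact": 0.90,
}

posteriors = bayes_update(priors, likelihoods)
for hypothesis, p in sorted(posteriors.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{hypothesis}: {p:.3f}")
```

With these invented numbers, the impact hypothesis goes from 0.25 to roughly 0.93 after one update. That is the sense in which a “smoking gun” is just evidence whose likelihood under one hypothesis dwarfs its likelihood under all the others.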

Instead, today we have, for example, multiple competing hypotheses for the unification of quantum physics and relativity, with each individual physicist having their own subjective and incommensurable opinion about which hypothesis is the most likely, mostly based on vibes and priors. It’s not quite Popper, not quite Cleland, not quite Bayes; but of the three, I believe Bayes is closest. And if we dare to be idealists, I believe moving the in-practice process to be more Bayesian (as opposed to more Popperian or more Clelandian) would be good for science overall.

I’m paraphrasing, based on Carol Cleland and Massimo Pigliucci’s portrayal of Henry Gee’s stance. I haven’t directly read Henry Gee’s writing.

Basically, they’re two different things that likely would be used in different situations. Perhaps a less dismissive analogy would be saying that they’re like arithmetic and calculus: if you’re in a situation where you can use arithmetic/experimental science, you should probably do so, because it’s easier than calculus/historical science, and so you’re less likely to make mistakes when deriving your results. But when you’re in a situation where you have to use calculus/historical science, then tautologically, you have to use it.

This is (almost certainly) an oversimplification of Popper’s true stance. I haven’t directly read Popper’s writing, but I’ve read summaries of it. For example, https://plato.stanford.edu/entries/popper/ states:

Popper draws a clear distinction between the logic of falsifiability and its applied methodology. The logic of his theory is utterly simple: a universal statement is falsified by a single genuine counter-instance. Methodologically, however, the situation is complex: decisions about whether to accept an apparently falsifying observation as an actual falsification can be problematic, as observational bias and measurement error, for example, can yield results which are only apparently incompatible with the theory under scrutiny.

Thus, while advocating falsifiability as the criterion of demarcation for science, Popper explicitly allows for the fact that in practice a single conflicting or counter-instance is never sufficient methodologically for falsification, and that scientific theories are often retained even though much of the available evidence conflicts with them, or is anomalous with respect to them.

Cleland’s article also claims that you must reject a hypothesis given even a single contradictory observation, and so I will also “act as if” that were Popper’s stance. So any time you see either of us refer to Popper, imagine we’re referring to StrawPopper, a straw man version of Popper, instead. The core of my argument isn't merely "Scientists follow the practices that the real Popper advocates for"; I'm making the stronger claim that "Scientists even follow the practice that Cleland’s straw man version of Popper advocates for".

As an aside, “observing the position of Neptune” sounds like it lies a lot closer to the “historical sciences” side of the spectrum. We can’t, like, “position Neptune at coordinates X, Y, Z” or “set the gravitational constant to be such-and-such value” in the lab. Instead, our solar system was arranged in some particular way due to historical accidents, and all we can do is gather observations and see if those observations are compatible with whichever theory we currently think most likely to be true.

AND YET! “The position of Neptune” would most clearly be predicted by Newtonian mechanics (or general relativity, or whatever future theories of gravity we might come up with), which is all under the domain of physics, which is “definitely” an experimental science, right?

So then we have an experimental science, where we are forced to use historical methodologies??? This is another reason I think the “which is better?” argument is silly, even though I think the labels “experimental science” and “historical science” are themselves useful.

I don’t think these labels are “fundamental”, but instead reflect clusterings that exist in concept-space. When you have clusters in concept space, it’s useful to have names or labels for those clusters. And correspondingly, someone handing you (good) labels helps you notice clusters in concept-space that you may have previously missed.

More pedantically, we might say they have probability P=ε of being true, where epsilon is a standard math placeholder for “some unspecified but really small value”, because maybe we’re actually mistaken about our beliefs that we’ve successfully falsified the hypothesis.