In scientific studies, p-values are basically useless

Arguably, they are worse than useless; they are actively harmful

Before we get into the p-value stuff, I have a classic problem in Bayesian reasoning that I’d like you to think about. Don’t spend too long on it (maybe three to five minutes), because there’s a “trick” to it.

Here’s the problem: You have a test that can detect whether or not a person has a certain rare disease. It’s a pretty good test, but it’s not perfect. If you run the test on a person and they have the disease, then 99% of the time the test will correctly report that they have the disease (and 1% of the time it incorrectly states that they do not have the disease). On the other hand, if the person doesn’t have the disease, then 95% of the time the test correctly reports that they don’t have the disease (and 5% of the time it incorrectly reports that they do have the disease). You get a volunteer and run the test on them. The test reports they have the disease. What’s the actual probability that they have the disease?

I’ll spoil the answer after this break.

Last chance to think about the problem, ‘cause I’m about to spoil it. The correct answer is “it’s impossible to know the probability.” The problem doesn’t contain enough information to find out. What’s missing is the base rate of the disease, i.e. how common the disease is.

Let’s say we’re considering a fairly common disease, where 100 out of 1000 people have it. In the example problem given above, there are two possibilities: either you ran the test on someone who didn’t have the disease, or you ran the test on someone who did have the disease. For a given group of 1000, there are 900 people who don’t have the disease, and 100 people who do have the disease. For the 900 people who don’t have the disease, the test will incorrectly report that they do have the disease 5% of the time (i.e. for 45 of those people). For the 100 people who do have the disease, the test will correctly report that they have the disease 99% of the time (i.e. for 99 of those people). So of the 45+99=144 people who got a positive test result, 99 of them actually have the disease. 99/144 is approximately 69%. So given the 100 out of 1000 base rate we assumed earlier, if the test reports that your volunteer has the disease, there’s a 69% chance that they do in fact have the disease.

Now let’s consider a rarer disease, where only 100 in 1’000’000 people have it. For a given group of 1’000’000, there are 999’900 people who don’t have the disease, and 100 who do. Of the 999’900 who don’t have it, the test will incorrectly report that they do have it 5% of the time, leading to 49’995 false positives. Of the 100 people who do have it, the test will correctly report they have it 99% of the time, leading to 99 true positives. So out of the 99+49’995=50’094 people who got a positive test result, only 99 of them actually have the disease. 99/50’094 is approximately 0.2%. So given the 100 out of 1’000’000 base rate we assumed, if the test reports your volunteer has the disease, the probability that they actually have the disease is about 0.2%! And remember, this is for a test with a 99% true positive rate, and a 95% true negative rate!

You can fiddle around with the base rate number to get pretty much any probability you want. I gave two examples, one which led to 69% and one which led to 0.2%. That’s why we say that there wasn’t enough information in the original problem. Depending on what the base rate is, you can get almost any answer between 0% and 100%. And again, this is despite keeping the accuracy of the test the same in all of our hypothetical scenarios.
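The counting argument above is Bayes' theorem applied to a group of people. Here is a minimal Python sketch of it, assuming the rates given in the problem statement (a 99% true positive rate and a 95% true negative rate, so a 5% false positive rate). The function name `probability_of_disease` and the particular base rates in the loop are illustrative choices, not part of the original problem.

```python
def probability_of_disease(base_rate, true_positive_rate, false_positive_rate):
    """Return P(disease | positive test) using Bayes' theorem."""
    # Fraction of the whole population that is sick AND tests positive.
    true_positives = base_rate * true_positive_rate
    # Fraction of the whole population that is healthy AND tests positive.
    false_positives = (1 - base_rate) * false_positive_rate
    return true_positives / (true_positives + false_positives)


# Rates taken from the problem statement; the base rates are made-up examples.
TRUE_POSITIVE_RATE = 0.99
FALSE_POSITIVE_RATE = 0.05

for base_rate in (0.5, 0.1, 0.01, 0.0001):
    p = probability_of_disease(base_rate, TRUE_POSITIVE_RATE, FALSE_POSITIVE_RATE)
    print(f"base rate {base_rate:.4%} -> P(disease | positive) = {p:.2%}")
```

Only the base rate changes from one printed line to the next; the accuracy of the test is held fixed throughout.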

Okay, now what does this have to do with p-values?

A simplified model of how science is performed is as follows:

You come up with a hypothesis: maybe if people are primed with words associated with elderly people like “old”, “grey”, “bingo”, etc., that will cause them to walk more slowly[1].

You design an experiment to test this hypothesis against the "null hypothesis": the hypothesis that actually, no, priming people with those words has no effect on their walking speed.

You run the experiment and record your results.

Then you perform various statistical analyses on your results, including calculating the p-value. The p-value is defined as the probability that you would have obtained results at least as strong as the ones you actually got, if the null hypothesis were true.

If your p-value is below 0.05, you're happy, you label your results as "statistically significant", and then publish your study[2]. If your p-value is above 0.05, you're sad, and you file your study away in some cabinet, and no one else will ever know you performed this experiment[3].
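To make the definition of the p-value in the steps above concrete, here is a minimal Python sketch of one way to compute one: an exact permutation test. This is only an illustration, not the analysis used in any actual study. The walking times are made-up numbers, and `permutation_p_value` is a hypothetical helper name. The idea is that if the null hypothesis were true, the "primed" and "control" labels would be arbitrary, so we can try every possible relabeling and count how often the difference is at least as large as the one observed.

```python
from itertools import combinations
from statistics import mean

# Hypothetical walking times in seconds (made-up numbers, not from any study).
primed = [8.3, 8.1, 8.6, 8.4]
control = [7.3, 7.5, 7.2, 7.6]


def permutation_p_value(treated, untreated):
    """One-sided exact permutation p-value for the difference in means."""
    pooled = treated + untreated
    observed = mean(treated) - mean(untreated)
    n = len(treated)
    at_least_as_strong = 0
    total = 0
    # Under the null hypothesis the group labels carry no information, so every
    # way of choosing which n values are called "treated" is equally likely.
    for idx in combinations(range(len(pooled)), n):
        group_a = [pooled[i] for i in idx]
        group_b = [pooled[i] for i in range(len(pooled)) if i not in idx]
        if mean(group_a) - mean(group_b) >= observed:
            at_least_as_strong += 1
        total += 1
    return at_least_as_strong / total


p = permutation_p_value(primed, control)
print(f"p-value: {p:.4f}")
```

With these made-up numbers, every primed time is larger than every control time, so only 1 of the 70 possible relabelings is at least as strong as the observed one, and the script prints `p-value: 0.0143`. Note that nothing in this calculation involves how plausible the hypothesis was to begin with.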

So here’s the problem with p-values:

Recall that you’re performing an experiment to distinguish between two possibilities (the possibility that your hypothesis is true vs the possibility that the null hypothesis is true). The p-value (by definition) is the probability that your test would have given the results (or stronger) that it actually gave, if the null hypothesis were true.

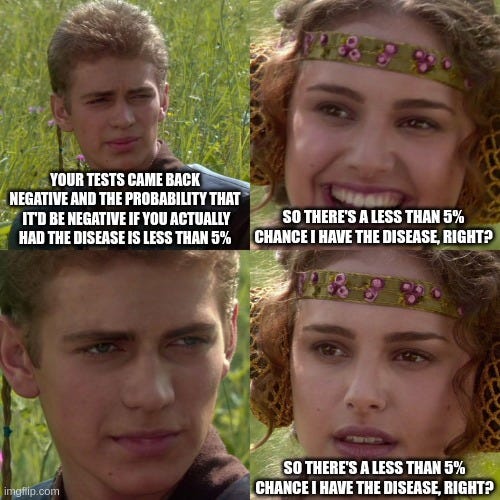

What if your hypothesis was “my volunteer has this one particular rare disease” and the null hypothesis is “actually, my volunteer doesn’t have that disease”? Then the p-value is (by definition) the probability that your test would incorrectly report that they have the disease, when in fact they don’t have the disease.

The thing we want to know is “What’s the probability that the hypothesis is true?”, or phrased in our medical example, “What’s the probability that the volunteer has the disease?” We showed earlier that we need a minimum of three values to determine this probability:

What’s the probability that the experiment would give a positive result if the hypothesis were true? I.e. what’s the probability the test would say someone has a disease if they actually have the disease? (This is called “Statistical Power”)

What’s the probability that the experiment would give a positive result if the null hypothesis were true? I.e. what’s the probability the test would say someone has a disease if they actually didn’t have the disease? (This is the “p-value”)

What’s the prior probability that the hypothesis is true? I.e. how common is this disease in the human population? (This is the “base rate” or “prior probability”)

All three values are required to calculate the thing we actually care about. Most scientific papers give you the p-value (or at least, they tell you the p-value is less than 0.05). Some scientific papers will give you the statistical power. Almost no scientific paper will give you the prior probability[4].
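In symbols, the three values combine through Bayes' theorem. The notation below is an assumption of this sketch rather than something taken from a paper: $H$ is the hypothesis, $H_0$ is the null hypothesis, and $+$ means the experiment gave a positive result.

```latex
P(H \mid +) =
  \frac{P(+ \mid H)\, P(H)}
       {P(+ \mid H)\, P(H) + P(+ \mid H_0)\,\bigl(1 - P(H)\bigr)}
```

Here $P(+ \mid H)$ is the statistical power, $P(+ \mid H_0)$ is the probability of a positive result under the null hypothesis, and $P(H)$ is the prior probability. Take away any one of the three and the left-hand side cannot be computed.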

Imagine you’re a doctor, and you only tell your patient two things: the outcome of the test, and the p-value of the test.

This isn’t just a “science communication” problem where scientists all know what p-values mean but laypeople misinterpret them. Many professional scientists also misinterpret p-values as a rough approximation of the thing we care about: they think that if p < 0.05, there’s a 95% chance that the hypothesis is true[5].

So p-values do worse than simply provide little to no information about the thing we actually care about (the probability that our hypothesis is true): they actively mislead not just laypeople, but professional scientists as well! A scientist could design and perform an experiment, calculate the p-value from the results, and arrive at wrong beliefs about what their own experiment shows!

[1] This was a real study: “Automaticity of social behavior: direct effects of trait construct and stereotype-activation on action” by Bargh, Chen, and Burrows, published in 1996. For your amusement, I’ll note that they reported a p-value < 0.01, but there are other more important issues with the study that overshadow my complaint about p-values.

[2] Where does the 0.05 p-value threshold come from? It’s completely arbitrary. One guy (Ronald Fisher) decided to use that value once as a throw-away example explaining the concept of p-values, and then the scientific community just decided to keep using that value from then on. That’s pretty much as silly as reading my example problem above about the test with a 99% true positive rate, and then deciding that all tests from now on must have the same 99% rate, or else they’re “not statistically significant”.

[3] This phenomenon is “bad” and is called “publication bias”. Ideally, we want the results of all scientific experiments to be published, whether they support the null hypothesis or not. Unfortunately, the incentives are set up so that scientists are actively discouraged from publishing results that support the null hypothesis. For example, many journals will refuse to publish such papers.

[4] It’s infeasible, given the current culture in science, for scientific papers to give the prior probability. To do so is equivalent to the author putting down a number stating the probability of their hypothesis being true before they ran the experiment, which almost all scientists are extremely reluctant to do for fear of introducing subjectivity into their papers. So we can’t “fix p-values” by simply ensuring that we give all three values.

[5] “Citation needed”, but of course it’s difficult (and makes you feel a bit like a bully) to give specific examples of individual scientists who have misunderstood p-values. But take a look at the article “Not Even Scientists Can Easily Explain P-values” by Christie Aschwanden at https://fivethirtyeight.com/features/not-even-scientists-can-easily-explain-p-values/ as a starting point. There are other articles that assert many (most?) scientists do not understand p-values, but again they don’t stoop to naming and shaming any individual scientists.

https://www.cambridge.org/core/journals/political-analysis/article/comments-from-the-new-editor/3BE4074AAFA13AADEFF3CF7458C9F77E

https://staffblogs.le.ac.uk/bayeswithstata/2015/10/23/banning-p-values/