When to use Microservices vs Monolithic Architecture

I’ve worked on a team that owned one big backend service, and I’ve worked on another team that owned over fifty microservices. In both cases, the team chose the correct architecture for the project. Each style has its use cases, and neither is better than the other across the board. Nowadays, “microservice” is fashionable and “monolithic” has negative connotations, but the reality is that monolithic is the right choice for many projects.

The difference between the two architectural styles is more subtle than you might think. It’s not simply about the number of services you own or the sizes of those services. For example, suppose you start with one big (monolithic) service and feel like it’s gotten too big and complex. So you split it into two or three or even a dozen smaller services. In that case, there’s a good chance you’re still using a monolithic architecture; it’s just that you’ve got twelve (or however many) services, each using that monolithic architecture.

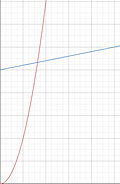

Instead, the difference is a matter of where the path of least resistance guides you. When a new requirement comes in, how do you go about implementing it? Do you make modifications to one of your existing services? Or do you spin up a brand-new service? The more likely you are to do the latter, the closer you are to the “microservices” side of the spectrum.

Spinning up a brand-new service for every new requirement probably sounds like overkill for the vast majority of use cases. And it is! That’s why for many use cases, monolithic is generally a better fit.

Microservice architecture also introduces a lot of complexity to the code. Generally, when your process calls a function that lives within that same process, you can assume the call will reach the function and return. But if you instead make a network call to another service, there are a ton of things that can go wrong: the network connection might be down; the network is up, but the service on the other side is down; the service is up, but it’s running a different version of the API than you expected; the service is up and running the correct API, but it’s currently busy or overloaded; etc.
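To make that contrast concrete, here is a minimal Python sketch. Everything in it is hypothetical: the exception classes, `score_locally`, `call_with_retries`, and `make_flaky_remote` are names I made up for illustration, and the "remote service" is just a stub that raises errors on demand. The point is only how much extra handling appears on the caller's side once a call crosses the network.

```python
import time


class NetworkDown(Exception):
    """The connection to the other service could not be made."""


class ServiceDown(Exception):
    """The network is up, but nothing is answering on the other side."""


class VersionMismatch(Exception):
    """The service answered, but with a different API version than expected."""


class Overloaded(Exception):
    """The service is healthy but too busy to take the request right now."""


def score_locally(amount):
    """In-process call: no transport sits between caller and callee."""
    return min(amount / 1000.0, 1.0)


def call_with_retries(remote_call, attempts=3, base_delay=0.0):
    """Run remote_call(), retrying only failures that might clear up on their own."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        try:
            return remote_call()
        except VersionMismatch:
            # Retrying cannot fix an incompatible API, so surface it immediately.
            raise
        except (NetworkDown, ServiceDown, Overloaded):
            if attempt == attempts - 1:
                raise  # out of retries: let the caller decide what to do
            time.sleep(base_delay * 2 ** attempt)  # exponential backoff


def make_flaky_remote(failures, amount=250.0):
    """Hypothetical stub for a remote service: raises each queued error once, then succeeds."""
    pending = list(failures)

    def remote_call():
        if pending:
            raise pending.pop(0)
        return score_locally(amount)

    return remote_call
```

With this stub, `call_with_retries(make_flaky_remote([NetworkDown(), Overloaded()]))` succeeds on the third attempt, while a `VersionMismatch` is raised straight away. The default `base_delay` of zero only keeps the demo fast; a real caller would want a non-zero delay and probably a timeout too.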

Why take on this extra complexity? It’s the classic case in software engineering of trading one type of complexity for another. Monolithic architecture is more straightforward when the business requirements are reasonably predictable and well-understood. However, when the business requirements are essentially unpredictable and nebulous (to the point where it’s not clear what programming languages or frameworks might be the right tool for the job), microservices start making a lot more sense.

When I worked on the project where my team owned fifty-plus microservices, our situation mitigated the downsides of microservices and gave us plenty of opportunity to use their strengths.

The project was to analyze customer behavior to detect fraud.

This is a highly nebulous requirement: When you start off, you don’t know what fraud looks like or how to detect it yet. So you brainstorm and come up with ideas. Then you start catching some of the fraudsters. But as you catch them, they realize that you’re on to them, so they change their behavior, and you’re back at square one, trying to figure out new ways to detect and catch them.

That’s the opportunity for taking advantage of the strengths of microservices. As for mitigating the weaknesses, our services didn’t have to produce their responses in real time. It was okay if we detected the fraudulent behavior 24 hours or even a week after it had occurred (though obviously, the sooner, the better). This approach would not work for a CRUD app where a user clicks a button and expects a response page within 200 milliseconds.

This relaxing of response-time requirements meant that all communication between our services could be asynchronous. In a synchronous design, if service A calls service B, then service A has to handle all the failure modes listed earlier. In our asynchronous design, however, service A can simply place work items in a queue that service B pulls from and works on at its own pace. If you use a reputable cloud-hosted queue service like Amazon SQS, you can assume that it’ll “just work”. So the only problem service A really needs to worry about is not being able to reach the queue because the network is down.
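Here is a rough sketch of that hand-off in Python. It is not the SQS API: `WorkQueue` is an in-memory stand-in I invented for illustration, and the function names, the event shape, and the "flag anything over a threshold" rule are all assumptions. What it shows is that service A only ever talks to the queue, and service B drains it in whatever batch size suits it.

```python
from collections import deque


class WorkQueue:
    """In-memory stand-in for a durable queue (hypothetical, not the SQS API)."""

    def __init__(self):
        self._items = deque()

    def send(self, item):
        self._items.append(item)

    def receive(self, max_items=10):
        """Return up to max_items items, oldest first, removing them from the queue."""
        batch = []
        while self._items and len(batch) < max_items:
            batch.append(self._items.popleft())
        return batch

    def __len__(self):
        return len(self._items)


def service_a_publish(queue, purchases):
    """Service A: enqueue each purchase and move on without waiting for service B."""
    for purchase in purchases:
        queue.send(purchase)


def service_b_drain(queue, batch_size=2, threshold=1000):
    """Service B: pull work at its own pace and return the customers it flags."""
    flagged = []
    while True:
        batch = queue.receive(batch_size)
        if not batch:
            return flagged
        for purchase in batch:
            # Placeholder rule for illustration only.
            if purchase["amount"] > threshold:
                flagged.append(purchase["customer"])
```

Service A never learns whether service B is up, busy, or mid-deploy. If B is down for an hour, the work simply waits in the queue until B comes back.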

In fact, the way we architected our collection of microservices was to put every service behind an SQS queue, which itself was behind an SNS topic. Our upstream dependency would publish events modeling customer behavior to our SNS topics. For example, it might publish an event to one topic every time a customer buys something, to another topic every time a customer returns an item, and so on.

We might theorize that perhaps running customer purchases through an Apache Spark map-reduce system written in Scala would help us catch fraud. So we would spin up such a service, with its own SQS queue subscribed to the “customer purchases” SNS topic. Then, as events come in, our microservice runs, does its analysis, and then emits its results to another SNS topic.

If it turns out that this wasn’t an effective way to catch fraud (or if it used to be a good way, but then the fraudsters adapted their behavior, and now it is no longer a good way), that’s no problem. We can simply spin down the service, delete the SNS-to-SQS subscription, and reduce our ongoing costs to zero.
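The spin-up and spin-down cycle can be sketched with an in-memory model of the topic-to-queue fan-out. This is an illustration under loud assumptions: `Topic` is a hypothetical class standing in for an SNS topic with one queue per subscribed service, and the strategy names and the "amount over 1000" rule are invented. It is not how you would call AWS.

```python
from collections import deque


class Topic:
    """Hypothetical stand-in for an SNS topic: copies each event to every subscribed queue."""

    def __init__(self, name):
        self.name = name
        self._queues = {}

    def subscribe(self, service_name):
        """Create a queue for one service and start delivering events to it."""
        queue = deque()
        self._queues[service_name] = queue
        return queue

    def unsubscribe(self, service_name):
        """Stop delivering events to a service that has been spun down."""
        self._queues.pop(service_name, None)

    def publish(self, event):
        for queue in self._queues.values():
            queue.append(event)

    def subscribers(self):
        return sorted(self._queues)


def run_analysis(name, queue, results_topic, is_suspicious):
    """Drain one service's queue and publish a result for each suspicious event."""
    while queue:
        event = queue.popleft()
        if is_suspicious(event):
            results_topic.publish({"strategy": name, "customer": event["customer"]})


def demo():
    purchases = Topic("customer-purchases")
    results = Topic("analysis-results")
    report_queue = results.subscribe("reporting")

    # Spin up two experimental strategies, each behind its own queue.
    big_spender_q = purchases.subscribe("big-spender")
    repeat_buyer_q = purchases.subscribe("repeat-buyer")

    def over_limit(event):
        return event["amount"] > 1000

    purchases.publish({"customer": "c1", "amount": 5000})
    purchases.publish({"customer": "c2", "amount": 20})
    run_analysis("big-spender", big_spender_q, results, over_limit)

    # The second theory did not pan out: drop its subscription and nothing else changes.
    purchases.unsubscribe("repeat-buyer")
    purchases.publish({"customer": "c3", "amount": 9000})
    run_analysis("big-spender", big_spender_q, results, over_limit)

    return list(report_queue), len(repeat_buyer_q), purchases.subscribers()
```

Notice that removing the "repeat-buyer" strategy touches neither the publisher nor the other strategy. That independence is what makes throwing a service away cheap.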

If we come up with an alternate theory, perhaps using some Python ML library, we can spin up a new service for that too.

This is where we’re really taking advantage of the strengths of microservices: because the services are entirely independent, each one can use precisely the right set of libraries and programming languages for the task at hand. In contrast, combining Java, Kotlin, Scala, Python, Rust, etc., within the same monolithic service would be a mess.

This is also why “spinning up a new service” became the default tool we reached for, instead of modifying existing services.

In addition to our “analysis” services, we also had reporting services. I mentioned that the analysis services would publish their output to an SNS topic. The reporting services would subscribe to those “result” topics, aggregate the results, and expose them through an embedded HTTP server that our internal review team could use to view the activity we’d flagged as probably fraudulent. For our newly developed analysis strategies, we’d have humans review each case and decide what action to take in response. As the analysis matured and our confidence in it grew, we’d gradually move towards automating more of that, so that we could focus more human-review time on the newer analysis strategies we’d developed since then.
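One way to picture that gradual shift from human review to automation is a routing rule keyed on each strategy's track record. The sketch below is purely illustrative: the `StrategyStats` record, the `route` and `summarize` functions, and the thresholds of 50 reviews and 95% confirmed are all assumptions of mine, not a description of how our confidence was actually measured.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class StrategyStats:
    """Hypothetical human-review track record for one analysis strategy."""
    reviewed: int = 0   # cases a human has looked at
    confirmed: int = 0  # cases the human agreed were fraud

    def precision(self):
        return self.confirmed / self.reviewed if self.reviewed else 0.0


def route(result, stats, min_reviews=50, min_precision=0.95):
    """Send results from well-proven strategies to 'auto', everything else to 'human'."""
    record = stats.get(result["strategy"], StrategyStats())
    if record.reviewed >= min_reviews and record.precision() >= min_precision:
        return "auto"
    return "human"


def summarize(results, stats):
    """Aggregate results into counts per (strategy, route), as a report page might."""
    return Counter((r["strategy"], route(r, stats)) for r in results)
```

A strategy with no review history at all defaults to "human", which matches the idea that new analysis strategies get the most reviewer attention.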

This is a rather specific situation, though, which is why monolithic architecture (possibly involving multiple small but still monolithic services) made a lot more sense for many of the other projects I’ve worked on. For example, you can often just decide on a single web framework in a specific programming language and expect the entire project to be implemented in that framework. Requirements change in every project, but they usually don’t change so significantly that it’d make sense to scrap an entire service. And many projects involve live user interaction where a user clicks a button and expects an immediate response. In those situations, I would generally recommend a monolithic architecture over a microservice one.