Increasing Unit Test Code Coverage Beyond a Certain Point Correlates With More Bugs - A Statistical Argument

Many software developers believe that increasing unit test code coverage on a software project improves the quality of the codebase (for example, by reducing the number of bugs). Often they’ll concede that there are diminishing returns beyond a certain point. However, the reality is worse: beyond a certain point, increasing code coverage decreases the quality of the codebase.

The argument for this is actually a fully general statistical argument that applies across multiple domains. So, for example, it shows that while being tall generally correlates with being good at basketball, beyond a certain point, being too tall correlates with being worse at basketball. However, this is primarily a software development blog, and its application to code coverage and software quality is surprising to many people, so that’s the application we’ll focus on for this article.

The takeaway I want to present is that going for 100% code coverage is actively harmful to your project’s quality. It increases the number of bugs and decreases the quality of the codebase.

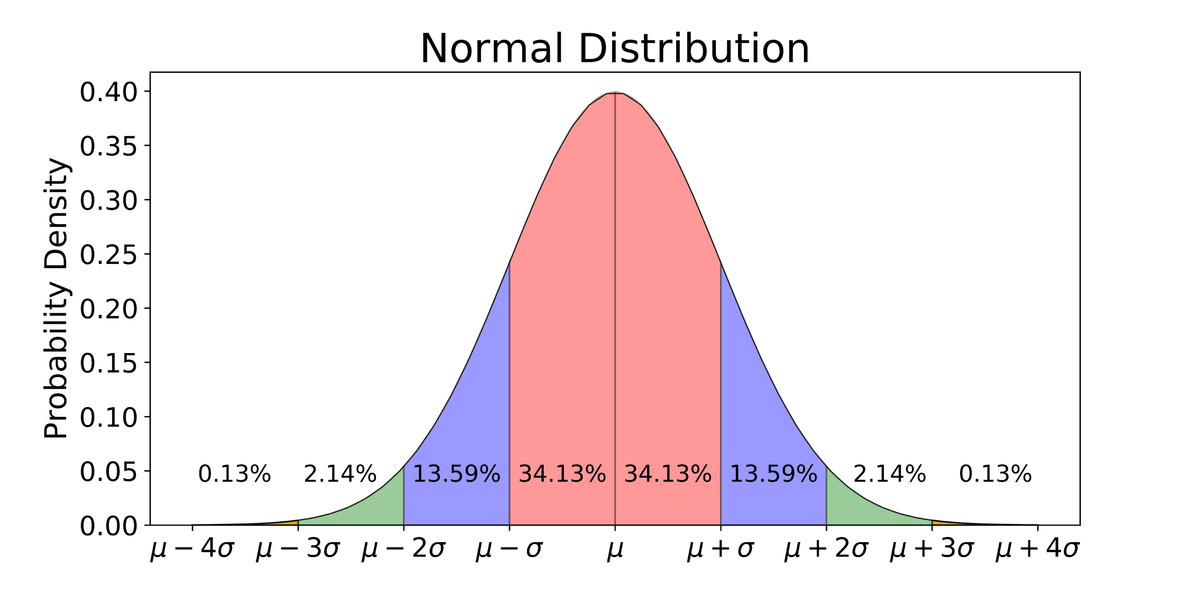

Let’s accept as a first axiom that code coverage is approximately normally distributed across projects. That is to say: a few projects have no code coverage, many have a medium amount, and a few have 100% code coverage. Similarly, codebase quality (or, if you prefer something a little easier to measure objectively, the number of bugs) is also approximately normally distributed.

If you plot code coverage against software quality, you can then measure some value for the correlation present in the data. There are a couple of possibilities here of what the correlation might end up being.

One possibility is that the correlation is negative: the more code coverage you have, the lower the quality of the project. Almost no one believes this, but if you do, you presumably already agree with my conclusion that we should not aim for 100% code coverage.

Another possibility is that there is zero correlation: increasing code coverage has no effect on the quality of the codebase. Again, few people believe this. If you do, you’re hopefully already convinced that 100% code coverage is useless busywork at best and should not be your team’s goal.

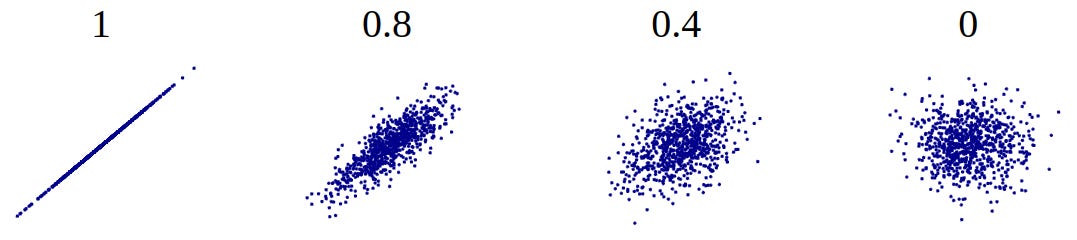

Finally, there’s the possibility that the correlation is positive. This is what most people believe. Here we’re saying that generally, as you increase code coverage, you also increase code quality. Almost no one believes that this is a perfect correlation (i.e., a correlation of 1), because that would mean it’s literally impossible to increase code quality without also increasing code coverage and vice versa.

So most people believe that code coverage positively correlates with code quality, with a correlation somewhere between 0 and 1, not inclusive.

This is what a plot of data that is positively correlated looks like:

Note that the plot for a correlation of 0 looks like a probability cloud roughly in the shape of a circle. As the correlation grows towards one, the probability cloud becomes more ellipse-like, with the major axis along the diagonal. The ellipse becomes thinner and thinner until you finally reach a correlation of 1, at which point the “ellipse” becomes a line.
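To make the shape claim concrete, here is a minimal simulation sketch. It assumes both variables are standardized (mean 0, variance 1) and builds correlated pairs from two independent standard normal draws; the helper names `sample_pairs`, `variance`, and `axis_ratio` are made up for this illustration. Under those assumptions the spread along the diagonal has variance 1 + rho and the spread across it has variance 1 - rho, so the cloud's length-to-width ratio should land near sqrt((1 + rho) / (1 - rho)).

```python
import math
import random


def sample_pairs(rho, n, seed=0):
    """Draw n (x, y) pairs with unit variances and correlation rho (assumed model)."""
    rng = random.Random(seed)
    pairs = []
    for _ in range(n):
        z1 = rng.gauss(0.0, 1.0)
        z2 = rng.gauss(0.0, 1.0)
        # Mixing two independent normals gives y a correlation of rho with x.
        pairs.append((z1, rho * z1 + math.sqrt(1.0 - rho * rho) * z2))
    return pairs


def variance(values):
    """Population variance of a list of numbers."""
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values) / len(values)


def axis_ratio(rho, n=50_000):
    """Estimate the cloud's length-to-width ratio from simulated points."""
    pairs = sample_pairs(rho, n)
    # Project each point onto the diagonal and onto the anti-diagonal.
    along = [(x + y) / math.sqrt(2) for x, y in pairs]
    across = [(x - y) / math.sqrt(2) for x, y in pairs]
    return math.sqrt(variance(along) / variance(across))


for rho in (0.0, 0.5, 0.9):
    theory = math.sqrt((1 + rho) / (1 - rho))
    print(f"rho={rho}: simulated ratio {axis_ratio(rho):.2f}, theory {theory:.2f}")
```

At a correlation of 0 the ratio sits close to 1 (a circle), and it grows without bound as the correlation approaches 1, which is the ellipse collapsing into a line.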

Suppose you’re somewhere near the middle of the ellipse. Then yes, in that case, as you increase your value along one axis (e.g., if you increase code coverage), you tend to also increase along the other axis (i.e., you increase software quality).

However, because the plot roughly approximates the shape of an ellipse, once you get to the top-right extreme, the correlation among the points in that region flips.

In this corner of the diagram, as you increase along one axis, you tend to decrease along the other axis.
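One way to check this numerically is sketched below. It assumes standardized, bivariate-normal data with a hypothetical overall correlation of 0.5, and it defines "this corner" as the points whose two coordinates sum to more than 3.0. Both the threshold and that definition of the corner are assumptions of the sketch, not something the argument pins down, and the helper names are invented for the example.

```python
import math
import random


def sample_pairs(rho, n, seed=0):
    """Draw n (x, y) pairs with unit variances and correlation rho (assumed model)."""
    rng = random.Random(seed)
    pairs = []
    for _ in range(n):
        z1 = rng.gauss(0.0, 1.0)
        z2 = rng.gauss(0.0, 1.0)
        pairs.append((z1, rho * z1 + math.sqrt(1.0 - rho * rho) * z2))
    return pairs


def pearson(pairs):
    """Pearson correlation coefficient of a list of (x, y) pairs."""
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    sxx = sum((x - mean_x) ** 2 for x, _ in pairs)
    syy = sum((y - mean_y) ** 2 for _, y in pairs)
    return sxy / math.sqrt(sxx * syy)


# x stands in for standardized code coverage, y for standardized quality.
pairs = sample_pairs(rho=0.5, n=200_000, seed=1)

# Assumed definition of the top-right corner: far out along the diagonal.
corner = [(x, y) for x, y in pairs if x + y > 3.0]

overall_r = pearson(pairs)
corner_r = pearson(corner)
print(f"all points:    r = {overall_r:+.2f} over {len(pairs)} points")
print(f"corner points: r = {corner_r:+.2f} over {len(corner)} points")
```

With these settings the correlation measured over all points is positive, while the correlation measured over only the corner points is negative. The outcome depends on how the corner is sliced: this sketch cuts across the diagonal, and a cut on coverage alone selects a different subset.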

This is a purely statistical argument and doesn’t rely on the specifics of software development practices. It applies equally in almost every domain where two variables correlate positively but not perfectly, that is, any time you get this “ellipse-shaped” probability cloud.

Now the million-dollar question is: At what point does this inversion happen? Should we target 80% code coverage? 60%?

Unfortunately, there isn’t a single number that is the “ultimate code coverage” goal every team should aim for. The right level of code coverage depends on several factors, including what proportion of your codebase is “glue code” vs. “business logic” and the type and quality of the tests your team is writing. For example, you can easily reach 100% code coverage that correlates very little with software quality by writing mostly smoke tests.
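As a rough illustration of the smoke-test point, the sketch below uses a hypothetical `apply_discount` function with a deliberately planted bug. Both tests execute every line of the function, so a line-coverage tool would report the same 100% for either one, but only the second test checks the result. All names here are invented for the example.

```python
def apply_discount(price, percent):
    """Hypothetical business-logic function with a planted bug."""
    # Bug: percent is never divided by 100, so 10 is treated as 1000%.
    return price * (1 - percent)


def smoke_test():
    # Runs every line of apply_discount but never inspects the result.
    apply_discount(100.0, 10)


def assertion_test():
    # Runs the same lines and also checks the behavior we care about.
    assert apply_discount(100.0, 10) == 90.0


def passes(test):
    """Return True if the test runs without raising AssertionError."""
    try:
        test()
        return True
    except AssertionError:
        return False


print("smoke test passes:    ", passes(smoke_test))
print("assertion test passes:", passes(assertion_test))
```

The smoke test goes green despite the bug, while the assertion test fails and exposes it. Identical coverage numbers can therefore say very different things about quality, depending on what the tests actually verify.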

This is a deep and complex topic that I will continue to cover across multiple articles.